You can read Part 1 of the 2021/2022 in review series here 🙂

This part will focus on the workshops that were planned and delivered over the course of the 2021/22 academic year. I won’t go into too much detail about all the workshops, but I will talk about the rationale behind the workshops, how I feel they went, and the feedback that I got.

How were workshops determined?

The workshops for each term were determined through a cycle of needs identification, the creation of a list of ‘potential’ workshops, teachers choosing which they want, and then planning and delivering the chosen workshops.

Last year the majority of needs identified and workshops created were determined top-down; that is, management decided what the needs of teachers were (and we mostly focused on general needs and needs of the academy) and the teachers had very little choice regarding which workshops they would like to see in the development programme. I identified this as something I wanted to change, and so we flipped everything! The needs we focused on were 80% bottom-up, meaning that 80% of the workshops aimed to meet needs identified by teachers themselves through their coaching sessions and co-constructed action points post-observation. Teachers also had control over which workshops they would see – the voting effectively enabled us to see which workshops the majority of teachers wanted.

Now, to really allow for teacher choice, we made a number of workshops obligatory (the ones with the greatest majority) and some optional (the ones that only some teachers put down as interesting). Surprisingly, we had pretty much full attendance at all workshops, but we feel that having this ‘optional’ option gave teachers just that little bit more control and flexibility regarding their development.
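For anyone who wants to automate the split between obligatory and optional sessions, here is a minimal sketch of how the votes could be sorted. The vote counts, the number of teachers, and the 50% cut-off are all assumptions made up for illustration; in practice the decision was a judgement call rather than a fixed rule.

```python
def split_workshops(votes, n_teachers, threshold=0.5):
    """Split voted workshop titles into obligatory and optional lists.

    `threshold` is a hypothetical cut-off: a title becomes obligatory
    when more than that share of teachers voted for it.
    """
    obligatory, optional = [], []
    # Go through titles from most to least votes.
    for title, count in sorted(votes.items(), key=lambda kv: -kv[1]):
        if count == 0:
            continue  # nobody asked for it, so it is dropped
        if count / n_teachers > threshold:
            obligatory.append(title)
        else:
            optional.append(title)
    return obligatory, optional


# Hypothetical vote counts from a staff of ten teachers.
votes = {"Learner motivation": 10, "Differentiation": 4, "Using L1": 3}
obligatory, optional = split_workshops(votes, n_teachers=10)
print("Obligatory:", obligatory)
print("Optional:", optional)
```

Raising or lowering `threshold` is the simplest way to shift how much of the programme is left to teacher choice.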

How were workshops evaluated?

Last year, I asked teachers to evaluate the workshops at the end of the term, but I found that I got very little useful data from this. So, this year, after every workshop, teachers were asked to evaluate the session. Here were the questions:

- How useful did you find today’s session?

- How clear did you think the presentation of content and instructions were?

- How effective do you think the instructional sequences, interaction patterns and materials were?

- How enjoyable was the session?

- Any other feedback (e.g. how the session could be improved)

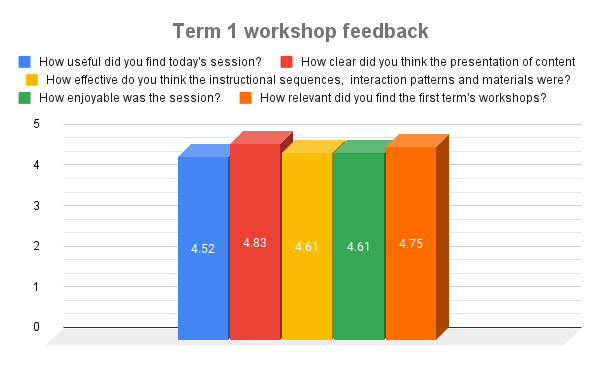

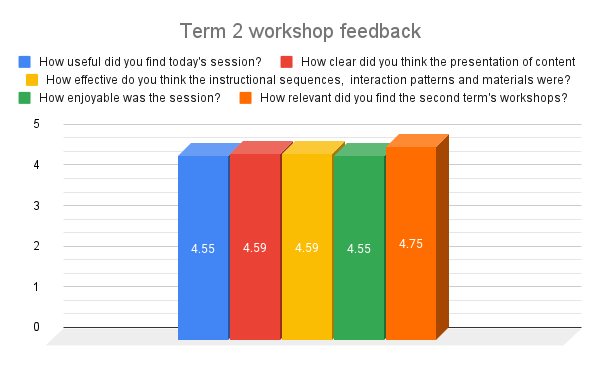

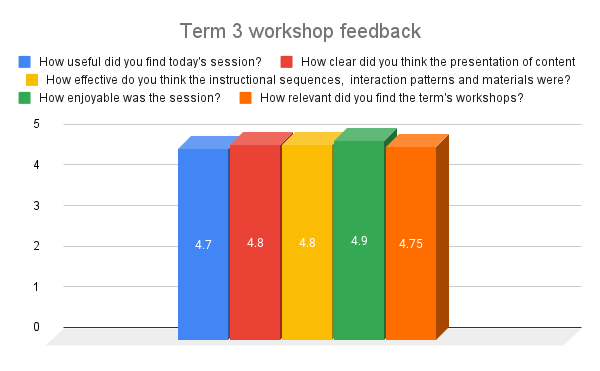

Questions 1 – 4 were accompanied by a five-point Likert scale, whilst question 5 was open-ended. In the following sections, I’ll summarise the feedback I got from teachers, averaging the points for the term, and briefly talking about common themes from teacher comments.
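If you collect the same kind of data, the averaging can be done in a few lines. This is only a sketch: the short question keys and the scores below are invented for illustration and are not the real responses.

```python
from statistics import mean


def term_averages(responses):
    """Return the mean score per Likert question, rounded to two decimals.

    `responses` is a list of dicts, one per completed questionnaire,
    mapping a question key to a score from 1 to 5.
    """
    if not responses:
        return {}
    questions = responses[0].keys()
    return {q: round(mean(r[q] for r in responses), 2) for q in questions}


# Hypothetical scores for questions 1 to 4 (keys are made-up short names).
responses = [
    {"useful": 5, "clear": 4, "effective": 4, "enjoyable": 5},
    {"useful": 4, "clear": 4, "effective": 3, "enjoyable": 4},
    {"useful": 3, "clear": 5, "effective": 4, "enjoyable": 3},
]

for question, average in term_averages(responses).items():
    print(question, average)
```

The open-ended question 5 is left out on purpose, as comments need to be read and grouped by theme rather than averaged.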

At the end of every term, I also asked teachers to evaluate the development programme as a whole, of which workshops were a part, but I’ll leave this major evaluation to the next part of this series. One of the questions, however, was “How relevant did you find the X term’s workshops?”. I’ll comment on this briefly for each of the terms within this part.

Workshops by term (with evaluation questions)

Term 1

Overview

Term 1 was heavily informed by the induction week findings, with all teachers saying that developing and maintaining learner motivation was something that they would like to explore in more detail. Inside I felt a little giddy when I saw this because learner motivation is something that I had not only been reading a lot about (Dörnyei was such an amazing scholar and researcher within ELT – he will be missed. You might like to take a look at the review I wrote for his Teaching and Researching Motivation), but it was something that I really valued as a teacher and as a manager. From teacher Jim’s perspective, I’ve always found that trying to understand what motivates my learners produces rewards. From manager Jim’s perspective, I want my teachers to have a good understanding not only of what motivates learners, but how to sustain motivation throughout the year so that we have fewer issues with learners (and teachers!).

You’ll also notice that there are a few ‘electives’ thrown in there as well. Along with motivation, there were a number of other ‘themes’ that came up. One was differentiation and the other was using L1 effectively in the L2 classroom. Unfortunately, we could only do one of these electives as the L1 workshop had to be cancelled due to teachers being away. This being said, the rationale behind including electives was to give teachers more control over their time – and I feel it worked. On elective days we had almost everyone show up, but some chose to take the time to focus on their own things, which was perfectly fine.

Evaluation

So, how did the workshops go? Let’s start from my perspective. I was very happy with how the workshops unfolded in that they were, for the most part, delivered well, and there were no major mishaps. Teachers appeared to be enjoying the sessions, and the discussions that were had were very interesting. It really felt like teachers were going deeper into their own personal teaching theories and experiences, and bringing these to the training room.

Some of the teachers seemed a little taken aback by the different medium of input, in this case through reading articles. I wanted to bring articles into the workshops for two reasons: one, I didn’t want to be lecturing and I thought these would be a good way to get information across at an individual level, which could then be expanded upon at group level; and two, I wanted to raise awareness of resources such as Modern English Teacher. In the end, they created a lot of discussion, although I now recognise that they should be kept short, perhaps two pages maximum, and if possible be given to teachers beforehand.

As motivation was the main theme, I introduced teachers to Dörnyei’s motivational strategies through a self-assessment questionnaire. We then set out to look at what motivational strategies we were using in our classes, and to see if we could try different ones out and see what effect they have on learners. Some teachers took this seriously, others did not. When we came back to discuss this, it was clear that many teachers actually hadn’t engaged with the in-class tasks. Overall, we had some good discussion, but I don’t think that this part of the term’s workshops was successful. I put this down to a few things:

- Timeframe and ability to notice change: Perhaps teachers didn’t feel too motivated to try things out as noticing a difference in learners might take quite a long time. Also, any judgements about what changes were seen in learners were likely to be very subjective, and perhaps teachers felt that they weren’t going to get much out of it.

- Support: Really, if I want teachers to carry out these mini-research projects, I need to provide extra support. They were asked to take some notes on one strategy that they wanted to try, but perhaps I should have given teachers the option to have an observer come in and get data for them.

- Teaching load: Teachers already have lots on their plate. Who wants to have extra ‘assignments’?

The ‘assignments’ for Terms 2 and 3 were made optional in reaction to what occurred in Term 1.

In terms of teacher feedback, overall it was quite positive. I’ll leave the graph here below:

Comments from teachers showed that they enjoyed the sessions and found them useful. I got some useful feedback regarding the amount of reflection/theory vs. ‘doing practical activities’ – one teacher mentioned that there should be less ‘going deeper into our teaching theories’ and more ‘let’s do the tasks’. In general, then, I feel that teachers found the workshops ‘successful’.

Term 2

Overview

Whereas Term 1 was heavily informed by induction week and teachers’ preferences and self-assessment, Term 2 (whilst still drawing on induction week data) was more influenced by teachers’ co-constructed action points from their observations. In Term 1, teachers conducted focused observations (i.e., an observation that focuses on a development point of their choosing, with feedback mainly being focused on this area), and then in the feedback stage, they were asked to identify two to three action points, guided by the observer/trainer. These action points were then ‘transformed’ into possible workshop titles. These titles were then shared with teachers who, like in Term 1, voted on which they would prefer.

While the majority of the workshops were bottom-up ‘focused’, we also had some top-down needs that needed covering – in this case, exam moderation and building up teacher-examiners. We are a Cambridge Learning Partner, and as such we prepare learners for exams. This means that every term there is an assessment period, and teachers are expected to run these assessments, providing feedback to learners. Marking and providing feedback on exams is always a difficult task – but ensuring inter-rater reliability can be even more difficult, hence the exam moderation workshops.

One great thing about Term 2 was the fact that my Director, Patrick, got to run his first session! The process of ‘guiding’ him through the creation of a workshop was really interesting – and rewarding. And it was something that I got to repeat in Term 3 with another teacher (I’ll expand more on this later). It was great to see Patrick pushing himself to actually run the session, and then getting some really great feedback.

Unfortunately, we had a number of teachers off sick with COVID throughout the term, and so we were not able to do the Tasks with Young Learners session – this was pushed to Term 3.

Evaluation

Coming into Term 2, I had a good ‘feel’ for the staff, what they wanted, and what they needed. This meant that I had a better understanding of how to run the sessions, what to include, and how to group teachers. All in all, I felt more confident running the workshops. As mentioned, Patrick ran one of the sessions (instruction giving), and so part of our planning for the workshops for Term 2 was discussing what he wanted to cover, how he wanted to do it, some of the ‘principles’ that make up a good workshop, etc.

The sessions themselves, overall, were quite successful. The main ‘theme’ for the term was error correction, although we only had two workshops on this. There was an optional assignment after the oral corrective feedback sessions, which about 50% of the teachers did, which was nice to see. The assignment itself was a checklist of oral corrective feedback techniques (e.g., recast) that teachers could complete at the end of a lesson. The idea was that we would bring this into the next workshop and talk about what we discovered about our oral corrective feedback procedures. One thing that I made a point of in observations for Term 2 was to identify if teachers were using different techniques to the ones they originally said they used most. It was great to see that teachers were experimenting (at least in the observation, although it was not part of their observation criteria) with different oral corrective feedback techniques. I view this as an indicator that the workshops were having some effect on teaching.

The top-down sessions for this term (i.e., exam moderation workshops) were actually big hits! In the sessions, we examined pieces of work together, ‘re-marked’ pieces of writing that we had marked previously after working together to understand the criteria better, and created a set of checklists that could be used to make marking between teacher-examiners more reliable. Following these sessions, I actually had teachers coming up to me asking for more information. I feel that these sessions opened up teachers to seeing that it’s ok to ask questions about exam criteria if you don’t know. I even had teachers coming to me to ask me to crossmark (more than usual) – I feel that this is a sign that teachers see the value in crossmarking (something that was emphasised in the workshops) and also that they feel ‘safe’ coming to the boss when they are unsure. All in all, I was really happy with the effect that these sessions had on teachers.

There were two electives held over the term (instruction and using L1 in class), and we had 100% of teachers attend both of these. I was really happy with this. I wouldn’t have been ‘bothered’ per se if teachers hadn’t come, but I view them wanting to come to these sessions as a good sign that they are finding the programme relevant and that they like attending the sessions! Of course, when talking about these things we also need to analyse the power structure of the academy. That is, they may have thought that even though the boss said it was ‘optional’, he still wants me to go and will think negatively of me if I don’t go. I tried my hardest to ensure that the message I conveyed was the opposite of this, so I do hope that none of my teachers felt that way. We did have some teachers not attend electives in Term 3, so I assume they felt comfortable enough not attending if they didn’t want to.

As usual, an anonymous questionnaire was sent out to teachers at the end of each session and the end of the term. Here are the results:

Teachers were also asked if the exam moderation workshops helped them mark Term 2’s exam more confidently, to which all teachers responded ‘YES’.

Comments received from teachers fell into two broad categories: timing, and reflection on difficulties regarding exam marking. The timing comments centred on teachers feeling we needed more time in the sessions to go a little deeper into the activities. This I felt as well. This is something we have been ‘working’ with for a while now – I feel that 120 minutes is probably the best amount of time for a workshop, but considering we do the workshops on a Thursday afternoon before classes, we’ve capped it at 90 minutes.

The exam reflection comments centred mainly on teachers identifying that working with criteria can be difficult. They mentioned that the sessions ‘clarified’ many doubts about the exam criteria, but they also mentioned that they wanted to see more examples of ‘top marks’ and ‘bottom marks’ as dictated by the exam board. This is something I can relate to – teachers want the ‘this is what you’re looking for’ examples. What was surprising is that these comments weren’t really providing feedback on the sessions – they were teachers engaging in reflection on what they learnt and gaps in their teacher-examiner knowledge/experience.

After seeing the feedback from the teacher survey and reflecting on what I saw in the sessions and in observations, I feel that Term 2’s workshops impacted teachers, although in different ways. Of course impact is difficult to measure, especially when we consider impact on learners, but my intuition tells me that these workshops (in combination with other development tools) impacted teachers in a way that made them more confident and able to carry out their responsibilities and meet their learners’ needs more effectively.

Term 3

Overview

Term 3 2021/2022 was much shorter than previous terms, which meant that we had less time for workshops. To give you a rough idea, the first three weeks of each term are usually ‘workshop-free’, giving teachers plenty of time to get back into the classroom. Then we have our exam period which usually lasts about two weeks towards the end of the term. We need to have all the workshops out the way before this period. So, all in all we were only able to plan in four workshops. As usual, things happened, teachers got sick, etc. and so the final workshop (exam moderation) was actually removed from the programme, and so we, unfortunately, only got to complete three workshops in the end. I don’t view this as necessarily ‘the end of the world’ – as they say, stuff gets in the way. We were flexible with our needs and capabilities, and worked towards what we could. I would have liked to have completed the final workshop though 😦

As with Term 2, Term 3’s workshops were informed by induction week data and Term 2’s action points. Also, one of my teachers wanted to try his hand at running a workshop, so I had the privilege of lending a guiding hand once again. This was another rewarding experience for me, and I know that it was both a learning curve and a huge ‘I can do this’ moment for the teacher. I was really happy to see what he came up with, how he took on advice, and how the workshop went in the end.

In terms of electives, two of the three sessions conducted were elective, and we had 85% attendance in one and 100% attendance in the other.

Evaluation

Even though there were only three sessions for Term 3, a whole lot of work went into preparing and delivering them. A’s session was planned over the course of about two months, with a ‘conception’ phase, followed by numerous ‘draft’ phases and then a rehearsal. The other two sessions, both planned and delivered by myself, also took time to create. I wanted these sessions to involve both loop input and teachers-as-learners (providing them with direct experience as learners). I was really happy with the end result – teachers engaged, talking, and coming across things that learners have to confront every day in the classroom (which led to more catalytic discussions). Later in this post, I’ll go into more detail about the developing listening skills workshop, as this is a workshop I am really quite proud of – even if it wasn’t perfect. I was also really happy with the Using tasks with Young Learners session – here we looked at the importance of input with young learners, and a number of really fun examples of input-based tasks.

The main negative from my perspective about this term’s workshops was not so much focused on content, but rather on the ordering of workshops. I feel that these workshops would have been brilliant to have at the beginning of the academic year. After seeing the reaction from teachers and their motivation to take the ideas to class, I was kicking myself that I hadn’t planned these in earlier. I know that these workshops grew out of needs and wants, and were requested by teachers, but I think the impact may have been larger had we had them at the start of the year.

This is the feedback teachers gave:

Comments from teachers focused, again, on timing: they wanted/needed more time with some activities. Basically, I am planning too many activities for certain workshops. In the listening workshop, one teacher noted that less time on understanding the ‘what’ of listening could have left more time for completing the ‘loop input activities’. This I agree with, although I do feel that teachers need to spend time looking at what they believe on certain topics.

Overall, I feel that Term 3’s workshops had a positive impact on teachers as I started to see more ‘discussion’ around what was being covered (especially regarding input-based tasks with young learners), teachers’ responses were overall positive, and teachers were actively trying out new ideas in class (e.g., some of the listening activities).

A workshop from the year – Developing learners’ listening skills

One of the workshops I’m most proud of from this year is Developing learners’ listening skills. It certainly wasn’t perfect as I had too many activities planned for the 90 minutes, but I saw some great reactions from teachers (e.g., there were comments such as “this is really difficult for me – I’ll have to re-think about how I ‘teach’ listening in class for my learners”) and I found that the materials that I created ‘worked’.

Success criteria: For teachers to listen to a short lecture on what listening is whilst completing Vandergrift’s pedagogical cycle, and then to complete a number of other listening tasks as learners, followed by a reflection and discussion stage to raise awareness of different listening techniques that can be used to ‘teach’ listening as opposed to ‘testing’ listening.

In short, the session started with teachers talking about things that they had listened to over the last 48 hours. We then spoke about how these ‘texts’ related to classroom listening. That is, do we actually do any real-life listening in class? Teachers completed this in a think-pair-share procedure (i.e., they are given time to think about the question, then they pair up and discuss what they come up with, and then everything is brought to and discussed in plenary, usually led by the trainer). We then got into a discussion about what listening actually is. Here I got teachers to work together to come up with a ‘mind-map definition’ on the board. It was great to see that teachers got things like ‘speaking and listening’, cognitive processes, sounds, etc.

Next, we listened to part of a lecture on what listening is (see below). This lecture was taken from Susana Martín Leralta’s plenary – you can find the video here. I didn’t tell the teachers that it was going to be in Spanish – this was the surprise! All the teachers spoke Spanish to different degrees – one teacher was Spanish so she was fine, but the rest had a range of B1 – C1 Spanish.

We listened to the audio in 30-40-second blocks, completing Vandergrift’s (2004) pedagogical cycle, which is the following:

- Prediction: Teachers predict what they are going to hear in the next block.

- First verification: Teachers listen and verify their predictions and compare their notes and ideas with a partner.

- Second verification: If there are disagreements, or the trainer sees that something was misunderstood, another listening takes place. Teachers again compare their notes. Plenary discussion is carried out to ‘reconstruct’ the text.

- Final verification: Teachers listen once more to the text as a whole to identify any information they missed previously. Teachers are provided with the script also.

- Reflection: Teachers reflect on what made this listening difficult, what strategies they used, etc.

In essence, I wanted teachers to experience a process approach to developing listening skills – the idea of teaching, not testing, listening skills. This part of the session was quite difficult for some teachers, which was actually really good as it created a lot of discussion regarding how they dealt with the text and, more importantly, what this means for learners.

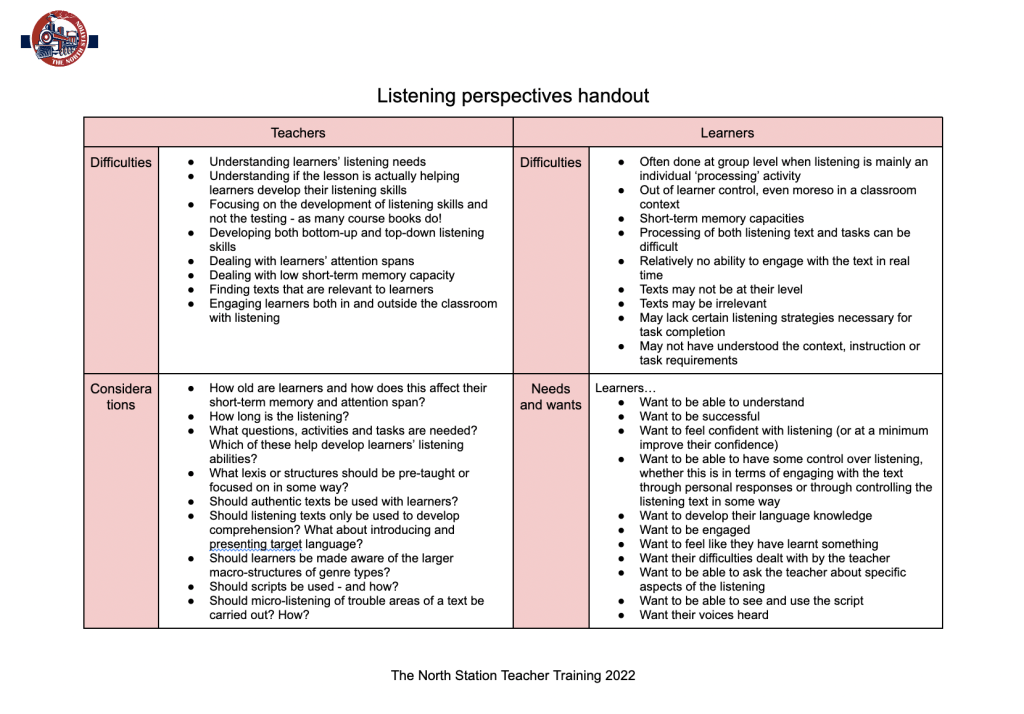

Next, teachers were asked to complete a ‘perspectives’ handout (see pictures below). Basically, I wanted teachers to identify difficulties from teachers’ and learners’ perspectives, and then considerations (i.e., things we teachers should be thinking about) and needs and wants of learners. This got a lot of conversation going, which I was more than happy about – and let go on for a little while. After teachers had finished, I asked them a few further questions to go a little deeper into their values, attitudes, beliefs, expectations, etc. regarding the teaching of listening (e.g., You mention that learners want to see the scripts. How often do you feel you should bring them into class?).

Next, teachers were told that they were going to listen to a set of instructions – and they had to write down every word! In essence, this is a dictation; however, a few things were a little different. One, the text was put together by myself and one of the owners, Sara. I planned the script and then got Sara to say everything as fast as she possibly could. Two, teachers were given two markers: a green marker and a red marker. They were told that they have control over the listening; i.e., they can say when to ‘start’ and ‘stop’ the listening. The idea behind this is that in real life we have quite a bit of control in conversations – we can choose to listen, ask someone to repeat things, etc. but in classroom listening, all of this ‘power’ is given to the teacher. So, I wanted to show teachers a way that they could hand over power to learners, and keep developing listening skills. The audio for the instructions is below, and the script can be found here. The cool thing about these instructions, though, was that they were the instructions for the next activity!

The instructions asked teachers to move outside to the corridor, where I had set up what I called a listening gallery. Throughout the corridor there were six task ‘posters’ put on the walls, all with their own set of instructions and QR codes to listening texts. Teachers had to move through the tasks individually, completing the tasks in a booklet. The tasks can be seen in the pdf below. Each of the tasks focused on something a little different – for example, some focused on developing bottom-up listening skills, while others focused on top-down skills. Other tasks asked teachers to evaluate a listening log and see what levels of learners it would be appropriate for.

Teachers were given about 15 – 20 minutes to go through the tasks. I thought it would be long enough, but it definitely wasn’t – we probably needed another 15 minutes. If this session had been 120 minutes in length, it would have been perfect. Below you can see a few pictures of some of the teachers working on the tasks.

Following the listening gallery, teachers then came back to the training room and were paired up. They discussed their answers to some of the tasks, and were also asked to speak about what made some of the tasks difficult/easy. After this, we then went through ‘possible’ answers, which led to even more discussion. You can find the final handout here. We finished the session with a short reflection task.

So, what did I like about this session? There were a few things:

- Loop input: Many of the tasks within the session included loop input. For example, while teachers were experiencing the listening task with the lecture, they were also learning about what listening is. Doing a number of these in a language that for the majority of teachers was not their first had the added benefit of creating the experience of a language learner.

- Teachers were engaged and pushed: I felt that teachers were engaged with the activities in that the activities didn’t bore them, so to speak, and I could see that they were ‘into them’. I also feel that the activities pushed teachers, i.e., they were just a little difficult and made them think a little deeper, from both a teaching and learning perspective.

- Teachers were able to come away with a number of ideas to use in class: I feel that this is something a lot of teachers need for a session to be successful, and, as mentioned, to a degree I agree. With this session, teachers were able to go back to their classrooms with a clearer understanding of some listening processes as well as a number of ideas to use, such as the pedagogical cycle, markers, etc. I also like how the activities were modelled and teachers got to be learners for them.

- Teachers led most of the discussions: I certainly had my ‘time’ giving input, but this was minimal. Much of my job was mediating the content and activities, guiding teachers more than instructing. I feel this fits with a social-constructivist approach to teacher training.

- Teachers had time to process information alone: I’ve been in workshops where all I wanted to do was process the information in front of me alone – without being rushed to move to the next activity. I feel that the listening gallery provided teachers with this ‘alone time’, and gave them control over what they listened to and how much time they spent on each task.

Here are some things I would do differently:

- Reduce the perspectives tasks to a verbal, plenary discussion as I think the filling out of the handout, even though it was done in groups, took a little while.

- Reduce the number of tasks in the listening gallery to five – I would say that the Pete Seeger song is the best one to remove, although I would still include all the information in the final ‘overview’ handout.

I’d love to see someone else run this session, so if you’re interested you can find my trainer notes below (with links to the materials). If you do try running this session, please let me know. And if anyone has any ideas on how this session could be improved, I’d love to hear your input!

What have I learnt?

Here are my takeaways from planning, helping plan, delivering, and reflecting on the workshops from this year:

- Providing teachers with choice helps with engagement: I’ve worked in places (and trained in places) where teachers had no choice over what was covered in the development programme in general, or specifically within the workshops. Last year, this was very much the case in the academy. In all of these cases, there were moments (some bigger than others) in which it was clear that teachers hadn’t bought into the programme/workshops – they simply didn’t find them relevant, or they resented ‘being made to be there’. This year there was absolutely none of this. Whilst I can’t be entirely sure, I do think that by providing teachers with choice, a.k.a. a degree of control, over what they are going to be engaging in, they are most likely going to ‘buy into’ the programme much more easily, saving a whole lot of headaches down the line. It was a lot of work collecting data, sifting through their comments, and getting forms out to them to complete, but you know what? It worked!

- Induction week is one of the most important times of the year: I believe I’ve said this before, but I’ll say it again – induction week should not be thought of as simply an ‘admin’ week. The data I collected regarding preferences, needs, wants, etc. was used throughout the entire year. Any trainers or managers out there thinking about planning induction week should look to take advantage of this precious time you have with teachers – especially newcomers to the academy.

- Co-constructed action points are a teacher educator’s friend: Observations are useful because they provide teachers with an opportunity to get feedback on a specific part of their teaching. One of the best parts of the observation procedure, though, is not actually the teaching – it’s the reflection and the co-construction of action points, i.e., the teacher and the observer working together. These action points are your go-to for deciding what workshops to think about. Why? Well, by focusing on these, you’re ensuring that development is personalised and relevant, something that has been noted as important by pretty much every teacher training guru out there (here are just a few: Roberts, Richards, Burns, Kumaravadivelu, Hughes, and Wright and Bolitho). These are not the be-all-and-end-all, but they are a really good starting point.

- Teachers-as-learners is a super fun and effective way to get discussion going: Whether it is through loop input or using teachers as learners through a micro-teaching activity, I found that teachers-as-learners got the discussion going really well. Teachers had ‘fresh’ perspectives to draw on, AND it made them see how difficult things can be for some learners, perhaps developing their ability to empathise with learners (something that is really important!).

- Developing workshops based on needs takes time, but pays off: I won’t lie – there was a lot of work involved. But that’s our job as teacher educators. For some of the workshops, I spent a good two to three weeks collecting information, planning, trying out activities in class, getting photos, running ideas through with my director, etc. Also, the fact that the workshops were determined through co-constructed action points and teachers’ choice meant that I had no prior knowledge of what to plan. This meant that from the end of Term 1, for example, to the start of workshop in Term 2, for example, I was in planning mode. It was a real test of my ‘teacher educator’ identity. I loved every minute of it though, not only because I could see that teachers enjoyed the sessions and noted that they were relevant, but because I had to go beyond my own knowledge, engage with sources that I hadn’t engaged with before, and experiment with creating my own teacher training resources.

- There may be resistance to making explicit teachers’ personal teaching theories: As you have probably gathered, I spent a lot of time asking teachers to explore their own thoughts about certain topics; i.e., making explicit their personal teaching theories. At times, there was resistance, for example comments such as ‘less theory and more ideas’. I understand these, but I also think that they are ‘one-sided’. What I mean by this is that teachers may only see the workshop as valuable if they come away with an idea to use in class (and I certainly aimed to do this in every lesson), but they may not see the development that occurs by making your thinking explicit, and being able to discuss, debate, and expand upon it. So, while I think that there may be resistance, teacher educators should always aim to include an exploration component in their workshops, if for no other reason than to activate relevant schemata for later discussion (although there are many other reasons).

- Workshops are a useful tool within the training toolbox, but they need to be complemented with more training tools: Workshops are great – teachers like them, trainers like them, and if they are run correctly they MAY even have an impact on learners and teachers. However, they need to be complemented with other development tools. In our case, these tools included observations, peer observations, conferences, and coaching. I don’t think the workshops would have been as effective if they hadn’t been delivered alongside these other tools.

- Data collection is a must: I think that this is really important for loads of areas within ELT, from teaching (e.g., assessment) to training (e.g., teachers’ feedback on workshops) and management (e.g., parents’ and students’ feedback on courses). With regard to workshops specifically, without the data from induction week, it would have been really difficult to know where I needed to focus. The same goes for the data collected from observations. Also, collecting data following the workshops, i.e., teachers’ feedback, helped me understand where I was hitting the mark and where I wasn’t. It takes time to put together questionnaires, but they are really useful. Here is an example of the feedback questionnaire that I used post-workshop. Feel free to copy it!

- It’s difficult to measure impact on learning: One thing I’ve really started to focus on over the last year and a half/two years is the idea of impact on learning. At the end of the day, we are all here to ensure that the learner’s journey is as successful as possible. One thing that I’ve learnt, though, is that it is really difficult to measure impact on learning short-term. This is something that we are trying to improve by looking at alternatives to learner assessment. One thing I will say, though, is that even though it may be difficult to assess impact on learning, we still need to keep pushing to better our teachers (and to get better ourselves!).

- Providing support to teachers wanting to deliver teacher training workshops is vital – and rewarding! I’ve spoken about this a number of times at conferences – the idea that we need to provide opportunities and support to those teachers that want to give training a shot. Working with both Patrick and A this year was a really rewarding experience for myself, and for them I can see that it was as well – and now they have an understanding of what it’s like to plan and deliver a workshop!

Final notes

The 2021/2022 academic year was another eye-opening year in teacher training. Working predominantly from bottom-up needs and creating workshops almost on demand was challenging but rewarding – and I believe that teachers benefited from them. Of course, the workshops weren’t perfect, and I’ll be looking to improve over the coming academic year. For now, though, this concludes the second part of the year in review. Would love to hear your thoughts!

Stay tuned as Part 3 will be out sometime in the near future 🙂

Update: You can find Part 3 here!

References

Vandergrift, L. (2004). Listening to learn or learning to listen? Annual Review of Applied Linguistics 24, p.3-25.

Fascinating 🙂

One thing I’d like to know more about:

“Developing workshops based on needs takes time, but pays off: I won’t lie – there was a lot of work involved. But that’s our job as teacher educators. For some of the workshops, I spent a good two to three weeks collecting information, planning, trying out activities in class, getting photos, running ideas through with my director, etc.” I really like your approach, but I can’t imagine how I could have fitted it in when I was doing my previous DoS role. How many teachers do you have in total? And roughly how many hours do you spend preparing each workshop? Do you use time-tracking at all?

Thanks,

Sandy

This is a great question, and I actually should have said something about this in more detail in the post. We have a small staff – 7 teachers, although at times it fluctuates to 8. Much easier to meet all teachers’ needs with a smaller group, definitely. That being said, I feel that by collecting the data, we can make decisions that cover the majority of needs for teachers (at least in terms of workshops). I feel the ‘elective workshop’ option is really important as many teachers resent having to attend workshops they feel are irrelevant (I don’t know how many external workshops I have delivered in which teachers were forced to go, even though the workshop focused on something they don’t really do, e.g., teaching adults when they are YL teachers). I remember when I started out training, we had 30-34 teachers, and that was a different dynamic.

I do feel, though, that workshops are just one tool. Coaching/mentoring is the other tool that needs to complement them. Observations are another.

In terms of hours spent planning workshops, it varied. Some workshops I already had ‘stuff’ from previous ones I had run. Many of them, however, I had to create from scratch, which was a challenge. I would say, on average, 2 – 4 hours of prep for these ones. And, perhaps I should use time tracking – I never have, but it would be interesting to see the results.

How did you determine what workshops to run? What prep went into these? Did you always run them yourself?

Hi Jim,

We weren’t anywhere near as systematic as you! We had some workshops which were core ones to run every year, particularly standardisation ones and ones to introduce features of how the school worked, like our classroom management systems for YLs/teens. Apart from that we had a shortlist of workshop topics which we gave to teachers and asked them to select from each term, though the senior team would sometimes reorganise them or add in an extra workshop if we spotted something teachers needed extra support with from our observations.

I ran about a quarter of the workshops, sometimes a few extra, with the rest shared amongst the senior team. We also asked teachers to volunteer to run them, with generally one or two volunteers running a workshop each year, from the 20 or so we offered.

In terms of prep, I often had materials or even full workshops ready from previous years. I would give these to whoever was running the workshop, and they were free to use / adapt / discard the materials as they chose. The senior team generally planned workshops by themselves, but ran anything by me that they wanted to, especially when they were new to workshops. Volunteers went through the whole planning process with me, similar to how you worked with A, though the actual planning was something they did alone. It tended to be that they would come with an idea, we would brainstorm what it could include, they drafted the workshop, I gave feedback, and then they came up with a final version.

Hope that answers your questions!

Sandy

By the way, have you read Engaging Language Learners in Contemporary Classrooms? I think it would be right up your street!

I have it on my bookshelf and have flicked through it a number of times, but have yet to read it front to back. It is on my list of books to finish!!! (there are so many!)

I really enjoyed reading this and I’ve learned so much, Jim! Thank you for taking the time to describe the whole process in such detail and most of all thank you for sharing your plans and handouts. I’ve downloaded everything and if I get to use them, I’ll definitely let you know how it went!

I love the approach you’re using and how you view your role as a mediator in the training room. There is a variety of tasks and input types and your teacher-learners seem to be taking away so much. Their feedback is super positive, so well done 😊

I understand you want to bridge the gap between the training room and the classroom – we talked about it in the sponge chat too 😊. Carrying out the mini research that you mentioned can be overwhelming when they’re already so busy. How about setting up a padlet after each workshop? You could ask them to reflect on how they implemented any of the innovations (during the next weeks/months) and what the result was. Even if just one teacher posts something, they can get the conversation started, which might motivate the others to experiment and report. Accountability. You could also remind them to revisit the padlet(s) after some months and post a comment, as impact takes time. What do you think?

RE the resistance you mentioned, and that they expect practical ideas rather than theory, this quote might help 😇 haha:

‘Theory without practice would be mere abstract thinking, just as practice without theory would be reduced to naive action.’ Freire, A. M. A. & Vittoria, P. (2007). Dialogue on Paulo Freire

Well done on your hard work, Jim. They’re lucky to have you! 👏👏

Thanks for the feedback, Rachel!

I love the idea about the padlets. In the mini-projects that I tried to get them to do, they had to tick off the things they did in their classes, and then bring in these tick sheets to the next session. Some did, but most didn’t. I think a padlet could be a good idea to bring in, mainly because they can do it from their phones.

Love the quote by the way! Currently doing a lot of work that focuses on Freire’s work, so will keep it in mind!

Best of luck with the assignments!!! 🙂

Great, hope they like the idea too!😂 And thank you, I’m almost there!
